Using generative AI in the workplace checklist: Free template


Generative AI workplace checklist

This checklist helps your company manage the responsible use of generative AI tools by addressing key risks such as data privacy, intellectual property, and cybersecurity. Working through each step helps you comply with regulations, protect sensitive information, and avoid issues like biased or inaccurate outputs. This structured approach also helps your organization stay secure and prepared for future changes in AI technology.

How to use this generative AI workplace checklist

Here’s how to get the most out of this generative AI workplace checklist:

- Follow the process: This checklist guides you through the key areas of concern when using generative AI tools in your workplace, from data security to managing legal risks. Use it to ensure that every important aspect is addressed.

- Tailor to your business: Adjust the checklist to match your organization’s policies and specific uses of generative AI tools. This will ensure it aligns with your business’s goals, legal obligations, and operational needs.

- Engage relevant departments: Collaborate with IT, legal, HR, and other key departments to review the checklist and ensure everyone is on the same page regarding generative AI use. Their involvement is crucial to managing compliance and mitigating risks effectively.

- Track and document progress: As you work through the checklist, keep track of completed tasks and actions taken. This helps ensure accountability and creates a clear record of how generative AI tools are managed in your organization.

- Review regularly: Given the fast-paced changes in AI technology and regulations, make sure to review and update this checklist periodically to stay compliant with evolving legal requirements and industry standards.

Checklist

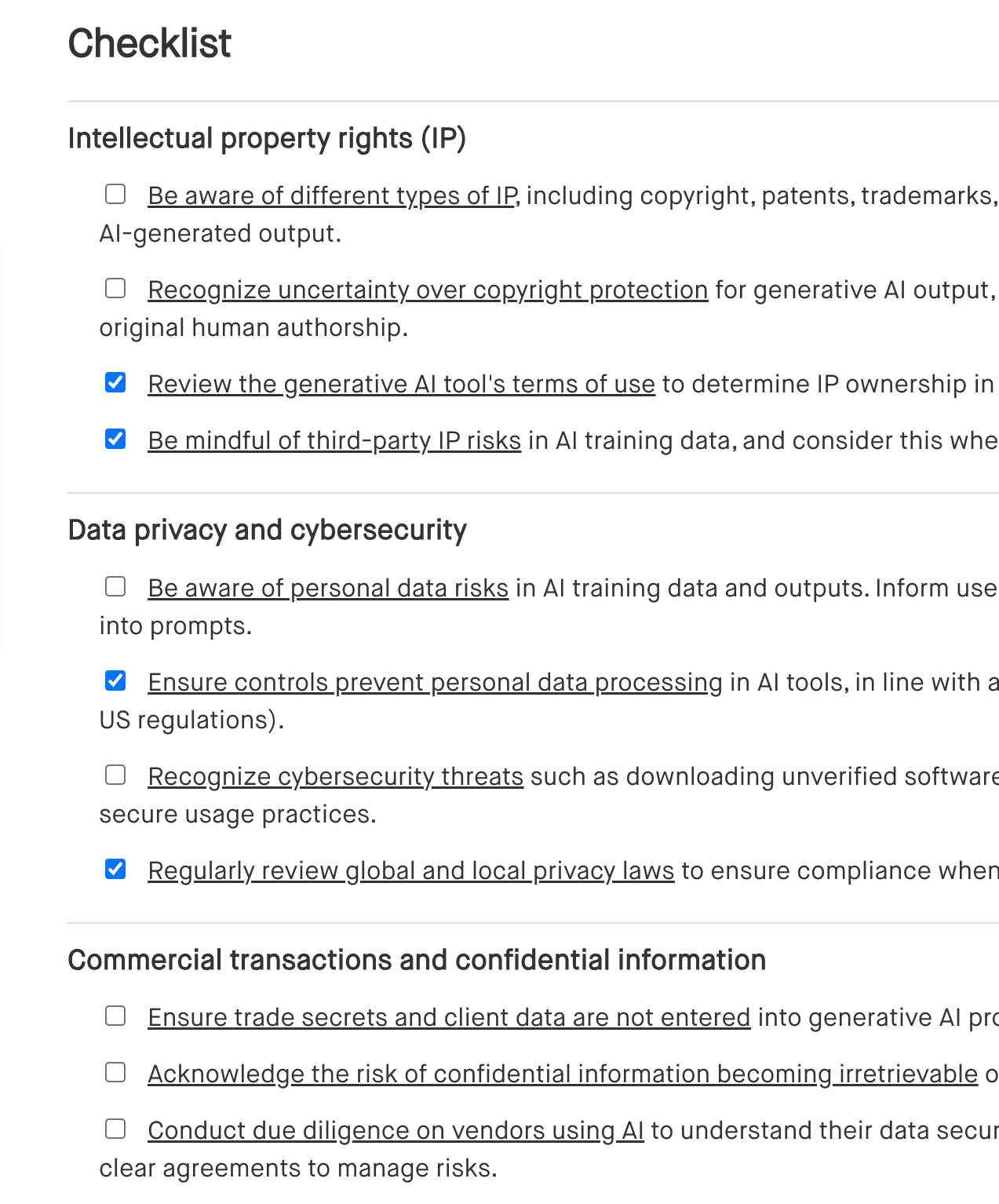

Intellectual property rights (IP)

[ ] Be aware of different types of IP, including copyright, patents, trademarks, and trade secrets that may apply to AI-generated output.

[ ] Recognize uncertainty over copyright protection for generative AI output, as copyright generally requires original human authorship.

[ ] Review the generative AI tool's terms of use to determine IP ownership in AI-generated content.

[ ] Be mindful of third-party IP risks in AI training data, and consider this when authorizing tools and use cases.

Data privacy and cybersecurity

[ ] Be aware of personal data risks in AI training data and outputs. Inform users not to enter personal information into prompts.

[ ] Ensure controls prevent personal data processing in AI tools, in line with applicable privacy laws (e.g., EU GDPR, US regulations).

[ ] Recognize cybersecurity threats such as downloading unverified software or mismanaging passwords. Promote secure usage practices.

[ ] Regularly review global and local privacy laws to ensure compliance when using generative AI tools.
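One way to support the items above on keeping personal data out of prompts is an automated redaction step that runs before a prompt leaves your network. The following is a minimal Python sketch, not a complete control: the function name `redact_prompt`, the placeholder labels, and the two regular expressions are assumptions made for illustration. Simple patterns like these catch only obvious email addresses and phone-like numbers, so they complement user training and legal review rather than replace them.

```python
from __future__ import annotations

import re

# Illustrative patterns only (assumptions): real personal data takes many
# more forms, such as names, addresses, and ID numbers.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_PATTERN = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_prompt(prompt: str) -> tuple[str, int]:
    """Replace email- and phone-like strings with placeholders.

    Returns the redacted prompt and the number of replacements made.
    """
    redacted, email_count = EMAIL_PATTERN.subn("[EMAIL]", prompt)
    redacted, phone_count = PHONE_PATTERN.subn("[PHONE]", redacted)
    return redacted, email_count + phone_count


if __name__ == "__main__":
    text, hits = redact_prompt("Contact jane.doe@example.com or +1 555 010 9999.")
    print(text)  # Contact [EMAIL] or [PHONE].
    print(hits)  # 2
```

A non-zero replacement count could also be used to warn the user or block the prompt, depending on how strict your policy is.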

Commercial transactions and confidential information

[ ] Ensure trade secrets and client data are not entered into generative AI prompts.

[ ] Acknowledge the risk of confidential information becoming irretrievable once used in AI training data.

[ ] Conduct due diligence on vendors using AI to understand their data security and confidentiality controls. Use clear agreements to manage risks.

Discrimination, bias and misinformation

[ ] Understand the potential for bias and misinformation in AI prompts and outputs due to training data.

[ ] Provide employees with clear usage instructions for generative AI systems. Discourage offensive, discriminatory, or misleading prompts.

[ ] Regularly review AI outputs for bias or misinformation linked to training data sources.

Risks with inaccurate outputs

[ ] Be aware of hallucinations—factually inaccurate but plausible-looking content from generative AI.

[ ] Recognize business risks from inaccurate content, including legal, reputational, or financial impacts.

[ ] Review all AI-generated output before use to identify and remove misleading or incorrect content.

Future developments and regulations

[ ] Monitor technological advances in generative AI to assess competitive and strategic value.

[ ] Track evolving government regulations impacting generative AI use in the workplace.

[ ] Pay attention to global frameworks like the EU AI Act and prepare for upcoming compliance requirements.

[ ] Align AI adoption strategies with regulatory obligations while promoting innovation.

Audit current use of generative AI

[ ] Conduct an internal audit of AI use across departments.

[ ] Identify use cases and integration points for generative AI in operations.

[ ] Create an inventory of tools used by employees and verify documentation.

[ ] Assess transparency around AI use across the organization.

[ ] Check whether AI-generated content is used in products or services, and assess related risks.

[ ] Evaluate legal and regulatory risks linked to existing AI usage.

[ ] Identify ethical or reputational risks arising from how AI is used.

[ ] Review internal safeguards and controls for generative AI risk management.

Consider the potential use of generative AI

[ ] Identify new opportunities for applying generative AI in future business areas.

[ ] Evaluate suitable AI tools for future workforce usage aligned with organizational goals.

[ ] Anticipate future compliance risks associated with increased AI adoption.

[ ] Reflect on ethical and reputational challenges from future AI use.

[ ] Plan appropriate controls to address future privacy, security, and ethical risks.

Manage legal and compliance risks

[ ] Decide whether to allow access only to specific generative AI tools or adopt a more flexible approach, blocking only certain prohibited applications.

[ ] Outline the permitted uses of generative AI tools within the organization to ensure appropriate application.

[ ] Assess what types of data can be safely input into generative AI tools, considering privacy, IP, and confidentiality.

[ ] Perform an overall risk assessment to identify which generative AI applications are suitable for your organization based on legal and compliance risks.

[ ] Create and enforce a company-wide policy that guides employees on how to appropriately use generative AI tools.

[ ] Ensure employees opt out of allowing prompt data to be used for training purposes. If no opt-out is available, include this factor in your risk assessment.

[ ] Put a system in place to rigorously review outputs from generative AI tools to ensure factual correctness and avoid issues like biased or discriminatory content and IP infringement.

[ ] Require employees to use work logins when accessing generative AI tools to ensure security and separation between business and personal use.

[ ] Educate the workforce on how to properly use generative AI tools, including understanding any limitations or restrictions.

[ ] Apply a thorough onboarding process to ensure generative AI vendors meet the company’s risk management requirements.

[ ] Verify that your organization’s insurance coverage is sufficient to cover generative AI use, including potential risks from outputs.

[ ] Inform customers and clients clearly about your use of generative AI tools, including in your privacy policies.

[ ] Include provisions in customer or client contracts to mitigate risks associated with generative AI outputs.

[ ] Ensure that disclaimers are attached to generative AI outputs before they are used internally or shared externally.

[ ] Perform privacy impact assessments (PIAs) where necessary to ensure compliance with legal requirements regarding data protection and privacy when deploying generative AI tools.

[ ] Monitor how employees use generative AI tools, and consider conducting a PIA before introducing new types of monitoring.
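The first item in this section, choosing between allowing only specific tools and blocking only prohibited ones, can be expressed as a small access rule. The sketch below is a hypothetical illustration in Python: `AccessMode`, `is_tool_permitted`, and the tool names are assumptions, not part of any real product. In practice this decision would usually be enforced through your network or identity management controls.

```python
from __future__ import annotations

from enum import Enum


class AccessMode(Enum):
    ALLOWLIST = "allowlist"  # only the listed tools may be used
    BLOCKLIST = "blocklist"  # every tool may be used except the listed ones


def is_tool_permitted(tool: str, mode: AccessMode, listed_tools: set[str]) -> bool:
    """Decide whether a tool is permitted under the chosen access mode."""
    name = tool.strip().lower()  # normalize so "Tool " and "tool" match
    if mode is AccessMode.ALLOWLIST:
        return name in listed_tools
    return name not in listed_tools


if __name__ == "__main__":
    approved = {"approved-assistant"}    # hypothetical tool name
    prohibited = {"prohibited-chatbot"}  # hypothetical tool name
    print(is_tool_permitted("other-tool", AccessMode.ALLOWLIST, approved))    # False
    print(is_tool_permitted("other-tool", AccessMode.BLOCKLIST, prohibited))  # True
```

The example shows the trade-off: under an allowlist, an unknown tool is denied by default, while under a blocklist it is permitted until someone adds it to the prohibited list.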

Implement appropriate policies

[ ] Implement a generative AI use policy and set clear rules for using generative AI tools in the workplace.

[ ] Review existing workforce policies and make sure they cover related areas, such as information security, IT resources and communications systems, bring your own device (BYOD) policy, personal information protection, and diversity and inclusion.

[ ] Implement missing policies where necessary.

[ ] Review policies at least once a year to keep up with the rapid pace of AI development.

[ ] Ensure policies promote secure, responsible, and ethical use of generative AI, without stifling creativity or productivity.

[ ] Create a separate policy for internal AI use, similar to a code of conduct or ethics statement, covering:

[ ] AI use in recruitment, appraisals, and promotions.

[ ] The development and use of AI tools, including generative AI, within your company.

[ ] The integration of AI in your services or products.

[ ] Contractual liability with third parties regarding the use of AI.

Vendor onboarding considerations

[ ] Clearly define the intended use of the generative AI tool before onboarding.

[ ] Identify specific business goals or outcomes you want to achieve by using the tool.

[ ] Determine if the tool poses risks related to confidential business information, intellectual property, or data protection.

[ ] Check for potential IP infringement risks, especially if the training data includes third-party content used without permission.

[ ] Consider cybersecurity risks related to the tool’s integration and usage.

[ ] Ensure compliance with ethics-related considerations, including potential biases in the tool's outputs.

[ ] Obtain a vendor warranty that the training data used for the generative AI system is publicly available without restrictions.

[ ] Implement internal controls to manage risks, including:

[ ] Establishing a clear generative AI use policy.

[ ] Providing training to the workforce on the responsible and secure use of the AI tool.

[ ] Ensuring compliance with broader organizational policies, such as IT security, confidentiality, and data governance.

[ ] Investigate the vendor’s credentials, system security, data practices, and compliance with AI regulations.

[ ] Clarify ownership and usage rights over generative AI prompts, training data, and resulting outputs.

[ ] Determine whether the AI tool functions as a closed system or whether your prompts and data are used to train third-party models.

[ ] Implement a system to document all inputs, outputs, and errors generated by the generative AI tool, especially if the system does not automatically store this data.
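If the tool does not store this data itself, the documentation system in the last item could start as an append-only log with one record per interaction. The following is a minimal Python sketch under stated assumptions: the function name `log_interaction`, the field names, and the JSON Lines file format are illustrative choices, not a requirement of any vendor or regulation. Because the log stores prompts and outputs, it may itself contain sensitive data and should be covered by your access and retention controls.

```python
import json
from datetime import datetime, timezone
from pathlib import Path
from typing import Optional


def log_interaction(
    log_path: Path,
    tool: str,
    user: str,
    prompt: str,
    output: Optional[str] = None,
    error: Optional[str] = None,
) -> dict:
    """Append one generative AI interaction to a JSON Lines log file."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "tool": tool,
        "user": user,
        "prompt": prompt,
        "output": output,  # None when the call failed
        "error": error,    # None when the call succeeded
    }
    # Append mode keeps earlier records intact; one JSON object per line.
    with log_path.open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(record) + "\n")
    return record


if __name__ == "__main__":
    path = Path("genai_audit.jsonl")  # hypothetical file name
    log_interaction(path, "example-tool", "user-1", "Draft a summary", output="Summary text")
    log_interaction(path, "example-tool", "user-1", "Draft a table", error="timeout")
```

Keeping one record per line makes the log easy to filter later, for example to list every error raised by a given tool.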

Benefits of using a generative AI workplace checklist

A generative AI workplace checklist helps you use AI tools effectively while minimizing risks. Here’s how it helps:

- Ensure compliance: It helps you stay aligned with legal, regulatory, and ethical standards when using generative AI, reducing the risk of penalties for non-compliance.

- Reduce risks: By following the checklist, you can reduce the likelihood of data breaches, intellectual property issues, or biased AI outputs, protecting your business from costly errors.

- Protect confidential information: It guides employees on how to handle sensitive data carefully when using AI tools, helping prevent exposure of trade secrets or customer data.

- Streamline processes: The checklist provides a clear, organized approach for managing AI use in the workplace, ensuring consistency and reducing confusion across your team.

- Foster responsible AI use: It promotes ethical and secure use of generative AI, ensuring your organization leverages AI's capabilities while maintaining integrity and safeguarding business operations.

Frequently asked questions (FAQs)

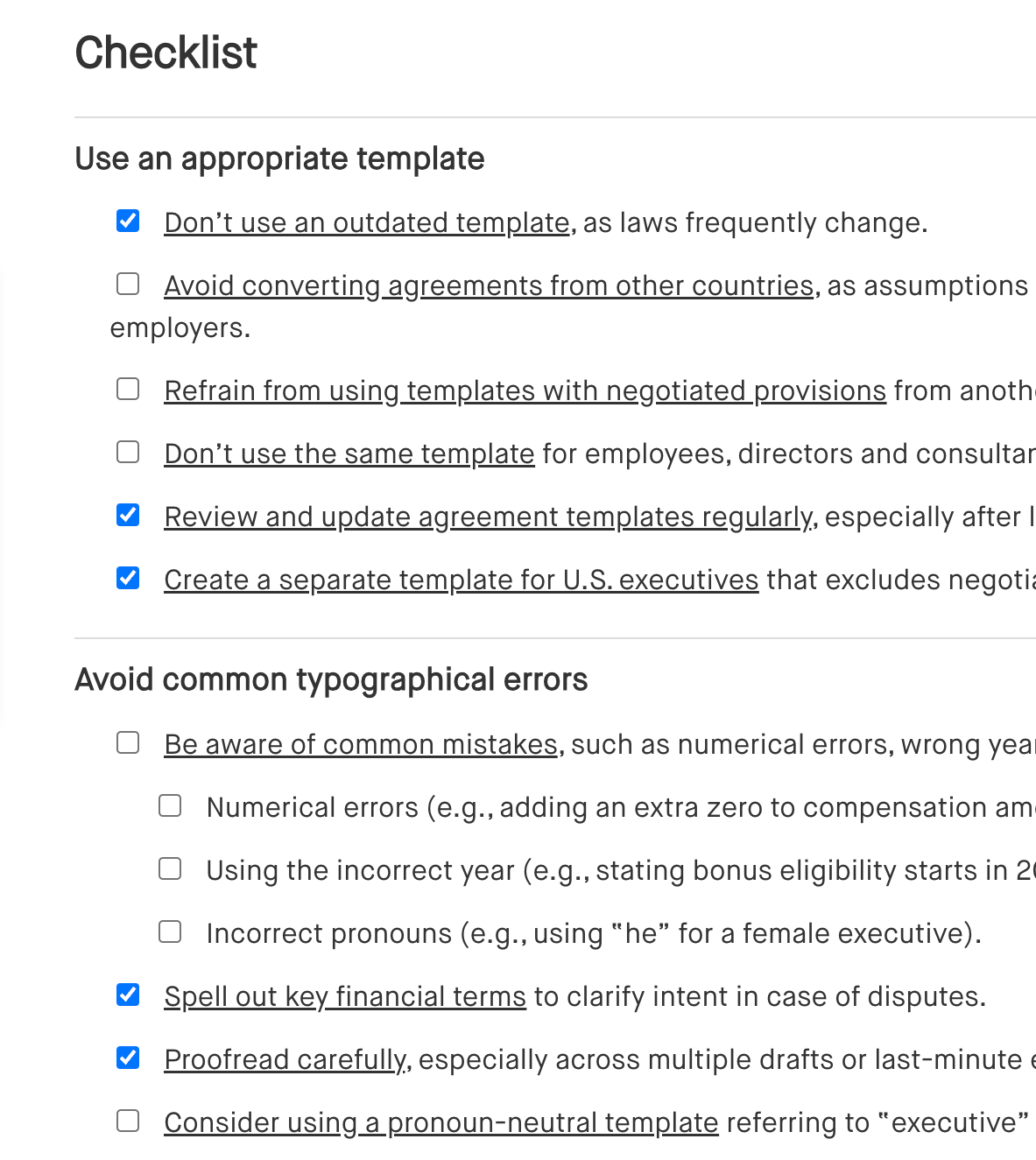

Outlines key components for drafting an executive employment agreement, including compensation, benefits, responsibilities, and termination terms.

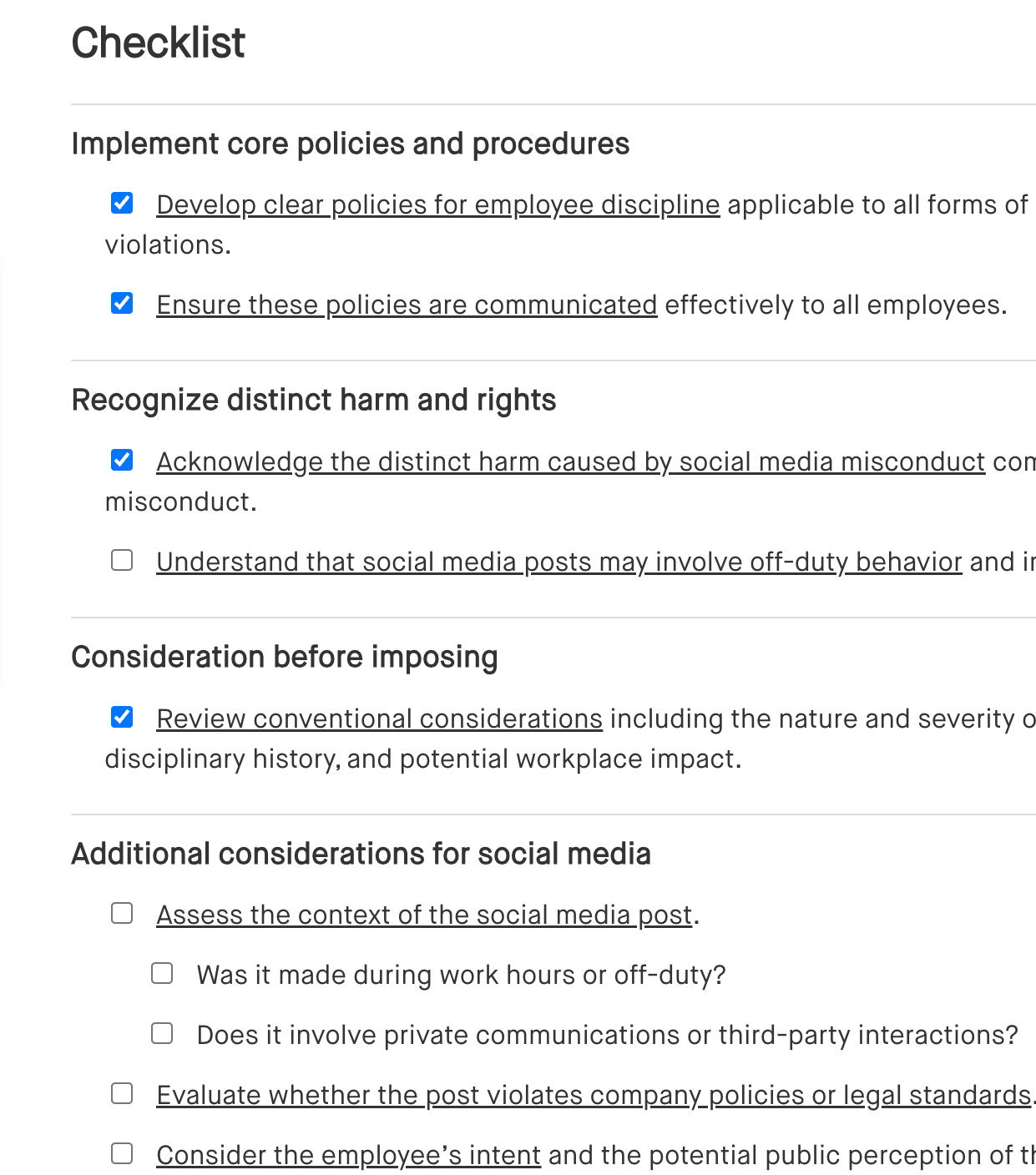

Identifies steps for addressing employee misconduct on social media, including reviewing the content, assessing impact, and determining appropriate disciplinary action.

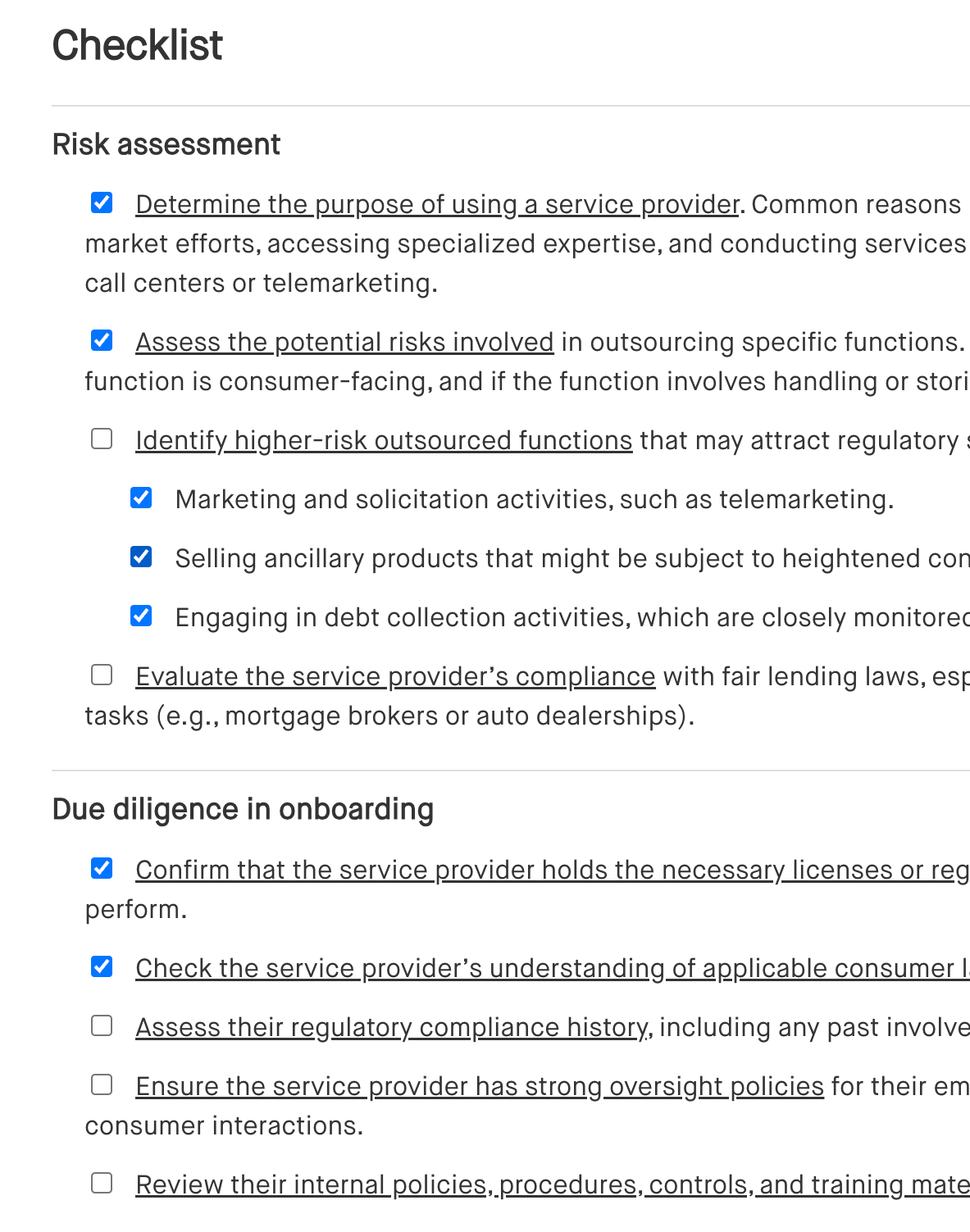

Covers essential steps for managing vendors, focusing on evaluating performance, maintaining clear contracts, and monitoring ongoing risk factors.